Go-To-Market Strategies for Gen AI Large Language Models

They Consist of Integrations with 1st- and 3rd-Party Applications and Distribution Partnerships with Cloud Compute Providers

The fall and winter of 2023 is an important period for generative AI companies with foundation models: it is their chance to become the engine behind even more meaningful ML-enabled applications and use cases, and to secure significant market share.

Good distribution and access to foundation models for both consumer and enterprise customers is important for advancing adoption, and many recent announcements from AI companies such as OpenAI and Google have centered on new ways to package and distribute their models.

This article is a deep dive into the Go-To-Market strategy of some of the largest companies with generative AI foundation models, with a focus on LLMs.

GTM strategies for foundation models consist of:

Integration into applications, typically targeted at consumer and SMB use cases. For example:

First-party applications, such as ChatGPT built by OpenAI and Bard built by Google

AI-enabled features deployed into existing products, such as the Duet AI portfolio bringing smart tools to Google’s Workspace applications

Integration into end-to-end ML platforms and model hubs, such as Microsoft Azure AI Studio and Hugging Face

Enterprise APIs and distribution partnerships, such as:

The recently launched ChatGPT Enterprise by OpenAI, which enables companies to build or fine-tune custom instances of foundation models and deploy them inside their organizations

The distribution partnership between OpenAI and Microsoft, under which GPT models power the AI-enabled tools in Microsoft 365

The consulting partnership between Google and Deloitte's Generative AI practice, which uses Vertex AI and Google's foundation models

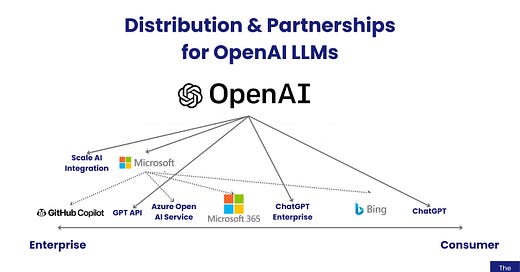

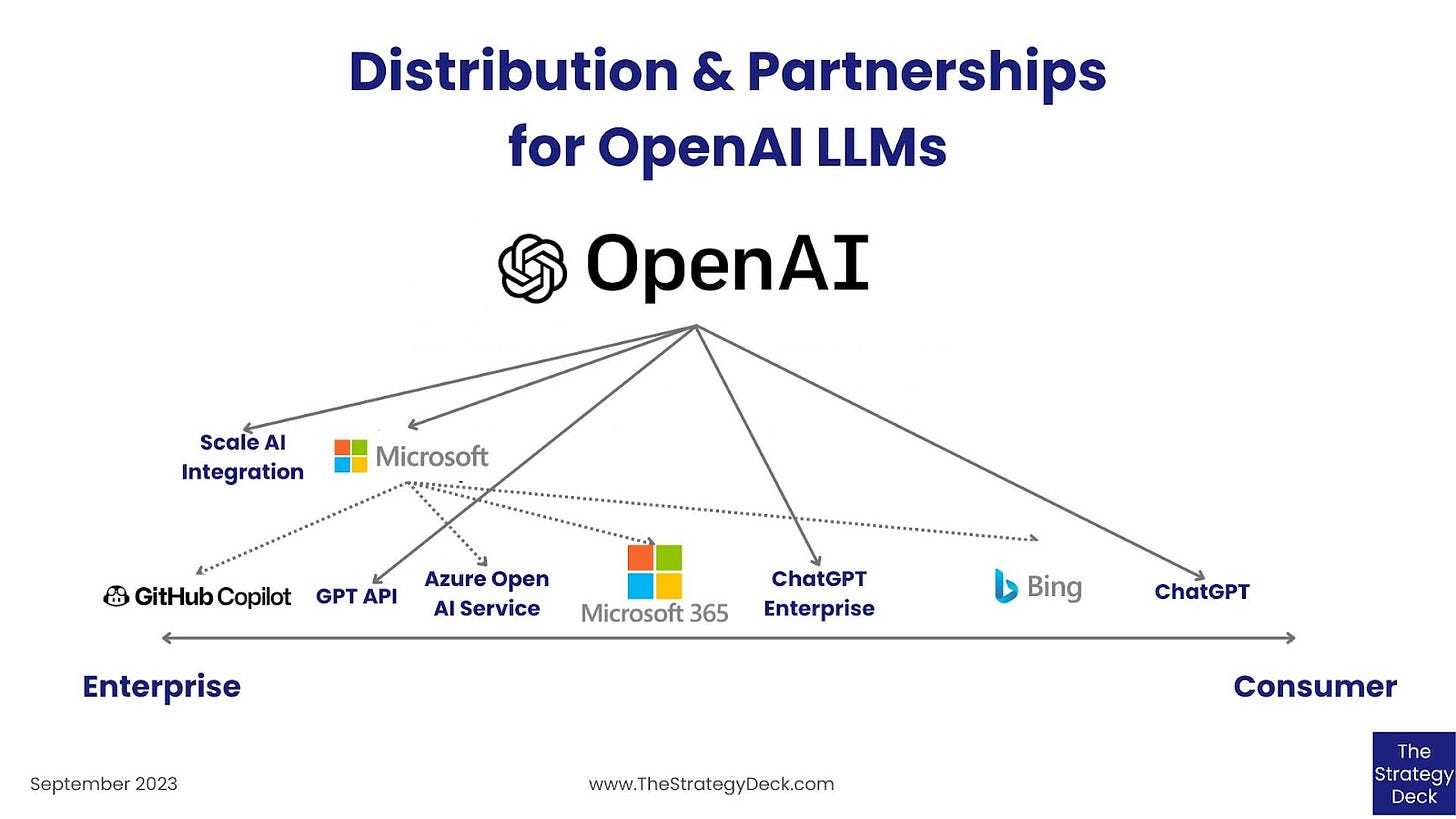

OpenAI Adopted a Two-Tiered Enterprise Distribution Strategy

OpenAI’s Go-To-Market strategy for GPT involves:

first-party applications - ChatGPT

an Enterprise version optimized for and customizable by businesses - ChatGPT Enterprise

an API which provides direct access to the catalog of GPT models

distribution partnerships with Microsoft and Scale AI

ChatGPT is a freemium consumer product with a paid Plus subscription at US$20/month, which provides access to the latest model version, faster response speeds and beta features such as Browsing, Plugins and Advanced Data Analysis.

Building on the consumer Plus plan, ChatGPT Enterprise comes with unlimited, higher-speed GPT-4 access, a longer 32k-token context window and advanced data analysis capabilities. It also offers the essentials for a managed deployment inside a company, such as an admin console for user management, customization options, SOC 2 compliance, usage insights and shareable chat templates. Pricing for ChatGPT Enterprise is currently custom, and OpenAI commits to not using the data shared with the tool for model training or fine-tuning.

The GPT API currently offers access to gpt-4 and gpt-3.5-turbo. GPT-4 pricing ranges from US$0.03/1k prompt tokens and US$0.06/1k sampled tokens for the 8k-context model to US$0.06/1k prompt tokens and US$0.12/1k sampled tokens for the 32k-context model.
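To see how per-token billing adds up, here is a minimal sketch that estimates the cost of a single API request from the two price points quoted above, which correspond to OpenAI's 8k-context `gpt-4` and 32k-context `gpt-4-32k` models at the time of writing. The price table is a hard-coded snapshot and `estimate_cost` is a hypothetical helper, not part of any OpenAI SDK; in practice the token counts would come from the `usage` field returned with each API response.

```python
from decimal import Decimal

# Snapshot of per-1k-token prices in US$ (assumption: rates as quoted in
# this article; OpenAI changes prices over time, so check the pricing page).
PRICES_PER_1K = {
    "gpt-4": {"prompt": Decimal("0.03"), "sampled": Decimal("0.06")},
    "gpt-4-32k": {"prompt": Decimal("0.06"), "sampled": Decimal("0.12")},
}


def estimate_cost(model: str, prompt_tokens: int, sampled_tokens: int) -> Decimal:
    """Estimate the US$ cost of one request (hypothetical helper).

    Prompt (input) tokens and sampled (output) tokens are billed at
    different rates, each quoted per 1,000 tokens.
    """
    rates = PRICES_PER_1K[model]
    prompt_cost = rates["prompt"] * prompt_tokens / 1000
    sampled_cost = rates["sampled"] * sampled_tokens / 1000
    return prompt_cost + sampled_cost
```

For a request with a 1,500-token prompt and a 500-token answer, `estimate_cost("gpt-4", 1500, 500)` comes to US$0.075, while the same request on `gpt-4-32k` costs US$0.15, since both of its rates are doubled. `Decimal` is used instead of `float` so that small currency amounts add up exactly.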

OpenAI’s partnership with Microsoft spans the Azure cloud platform, GitHub Copilot, Microsoft 365 and the Bing search engine.

Azure OpenAI Studio is part of Microsoft's Azure AI services catalog of out-of-the-box and customizable APIs and scenario-based services, which also covers computer vision, language understanding, speech transcription, intelligent search and more. The Azure OpenAI Service provides access to four GPT variants: GPT-3.5 Turbo with 4k and 16k context windows, and GPT-4 with 8k and 32k context windows.
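The four variants listed above differ mainly in context window, which has to hold both the prompt and the generated reply. A minimal sketch of how a developer might pick the smallest variant in a model family that fits a request follows. It assumes the standard window sizes of 4,096 and 16,384 tokens for GPT-3.5 Turbo and 8,192 and 32,768 tokens for GPT-4, and Azure-style model names such as `gpt-35-turbo`; `pick_variant` is a hypothetical helper, not part of any Azure SDK.

```python
from typing import Optional

# Context-window sizes in tokens, grouped by model family (assumption:
# standard sizes and Azure-style names; actual deployment names in an
# Azure resource are chosen by the user).
VARIANTS = {
    "gpt-35-turbo": {"gpt-35-turbo": 4_096, "gpt-35-turbo-16k": 16_384},
    "gpt-4": {"gpt-4": 8_192, "gpt-4-32k": 32_768},
}


def pick_variant(prompt_tokens: int, max_output_tokens: int,
                 family: str = "gpt-35-turbo") -> Optional[str]:
    """Return the smallest-context variant in `family` that fits the request.

    The context window must hold the prompt plus the generated output,
    so both token counts are added. Returns None if nothing fits.
    """
    needed = prompt_tokens + max_output_tokens
    fitting = [
        (size, name)
        for name, size in VARIANTS[family].items()
        if size >= needed
    ]
    # min() on (size, name) tuples selects the smallest window that fits.
    return min(fitting)[1] if fitting else None
```

For example, `pick_variant(6000, 1000)` returns `"gpt-35-turbo-16k"` because 7,000 tokens exceed the 4k window, while `pick_variant(6000, 1000, family="gpt-4")` returns `"gpt-4"`. Preferring the smallest window that fits is a sensible default because larger-context variants are billed at higher per-token rates.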

Branded as “Your AI-Powered Copilot for the Web”, the newly released GPT-powered conversational Bing search experience is available through the Edge browser and on the web for consumers, alongside a Bing Chat Enterprise experience that can be accessed by Microsoft 365 business customers.

For its Microsoft 365 productivity suite, Microsoft is developing generative AI features and tools under the Copilot name, which are expected to be deployed in Word, PowerPoint, Excel, OneNote, Outlook and Teams. GitHub Copilot is already available to help developers write code, as a subscription service priced at US$10/month for individuals and US$19/month per user for businesses.

GPT-3.5 is also available on Scale AI's data and model management platform through a strategic partnership with OpenAI.

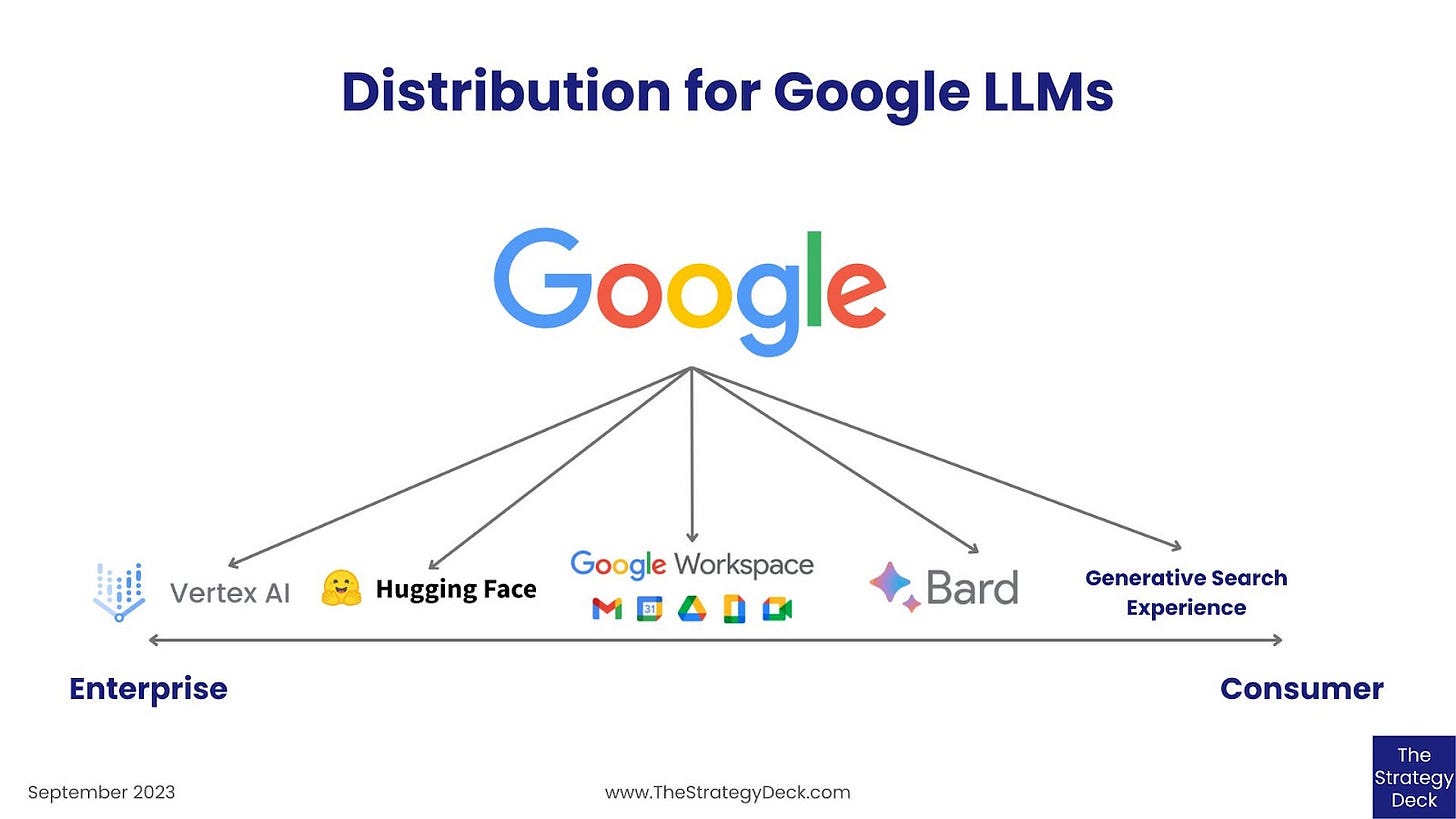

Google Distributes Its Models Throughout Its Consumer and Enterprise Products and on Hugging Face

Google develops and maintains a variety of large language models, both proprietary and open source, which it has used to build products and features throughout its portfolio of consumer and enterprise applications.

For search, Google is testing a Search Generative Experience (SGE), currently available through Labs in the Google mobile app and the Chrome desktop browser. Alongside it, Google offers the Bard conversational AI service, which launched on the LaMDA model (since upgraded to PaLM 2) and is generally available.

For consumers and businesses, Google is developing Duet AI to perform a variety of functions across its Workspace applications, including:

AppSheet, Google's no-code app development platform, where it will enable users to build workflow automation through natural language descriptions and conversation with the AI chatbot

Gmail, to assist with email drafting, including contextual assistance to fill in names and other relevant information

Docs, to help with text writing, draft generation and proofreading

Slides, to generate images from natural language prompts

Sheets, to aid in data classification and analysis and to create task- and project-planning spreadsheets

Meet, to take meeting notes

For AI app development, Google makes its open source models available on the Hugging Face model hub, and all of its models available on Vertex AI, the end-to-end ML development platform within Google Cloud.

Other LLM providers, including Meta, Anthropic and Cohere, have also secured partnerships with cloud compute providers and made their models available on a variety of ML platforms and model hubs. Specifically:

Meta's distribution strategy for its Llama models involves integrating them with all of the major cloud computing platforms and model hubs, making them available on Hugging Face, Amazon SageMaker, Microsoft Azure Machine Learning and Google Vertex AI

Anthropic recently made its model Claude 2 available through Vertex AI

Cohere started a collaboration with Oracle to train, build and deploy its generative AI models on Oracle Cloud Infrastructure and Oracle's Supercluster cloud platform.

With the release of new models, such as Google's anticipated Gemini, we are going to see even more distribution partnerships and integrations, both at the development level, in end-to-end ML platforms, and in consumer and enterprise applications. The success of each provider's GTM strategy, together with the performance and adoption of its models, will determine which providers gain significant market share and capture the tremendous value in the AI market.
